Over the past decade, the volume of research being published across health, education, psychology, and the social sciences has grown at a pace that overwhelms most researchers and practitioners. Anyone who has tried to explore a topic in depth, whether it’s the effectiveness of a therapy, the experience of a patient group, or the impact of a policy, quickly discovers that the challenge is no longer a lack of studies. Instead, the real struggle is making sense of the numerous studies, all employing different research methods, designs, and aims. This is why clear and reliable synthesis methods have become so important in modern research.

When people talk about synthesizing research, two terms often come up: systematic review and meta-synthesis. At first glance, they might look similar because both aim to bring together findings from multiple studies. Yet, these two approaches are built on completely different foundations. A systematic review focuses on gathering and evaluating evidence in a structured, repeatable way to answer specific questions, usually about the effectiveness of an intervention or the strength of an association (Higgins & Thomas, 2022). Meta-synthesis, on the other hand, aims to interpret and re-tell the meaning of people’s experiences by integrating qualitative studies into a deeper, richer understanding (Noblit & Hare, 1988).

The problem is that many students, new researchers, and even some experienced professionals often mix up the two. This confusion affects everything, from how research questions are framed to how evidence is used in practice. In some cases, the wrong method is chosen for the wrong reason, leading to findings that are either too shallow or completely mismatched to the research goal. For instance, using a quantitative-style systematic review to analyze deeply personal qualitative experiences can flatten important nuances. Likewise, trying to answer an outcome-based question using meta-synthesis simply won’t provide the kind of measurable evidence that policymakers or clinicians need.

As evidence-based decision-making becomes a global priority, methodological clarity has never been more essential. Government agencies, NGOs, medical organizations, and educational institutions increasingly rely on synthesized evidence to shape guidelines, funding decisions, and program designs. When the research method is unclear or used improperly, the consequences go far beyond academic misunderstandings; they influence real decisions affecting real people. Glasziou and Chalmers (2018) argue that poor or mismatched research synthesis wastes time and resources and can sometimes even lead to harmful recommendations.

This blog post begins by clarifying these two methods at a foundational level, not just in definition but in purpose. From there, it moves into their processes, strengths, emerging trends, and the types of insights they produce. By the end, the hope is that any reader, whether a student preparing a thesis, a practitioner searching for reliable evidence, or a researcher designing a new project, will feel confident about when and how each method should be used.

1. Conceptual Foundations: What Exactly Is Being Compared?

Before comparing meta-synthesis and systematic review, it helps to understand that these two approaches were built for different purposes and grew out of different research traditions. Although they are often mentioned together, they are not competing methods. They simply answer different kinds of questions, and each one relies on its own assumptions about what counts as knowledge.

Positivist, Evidence-Based Approach

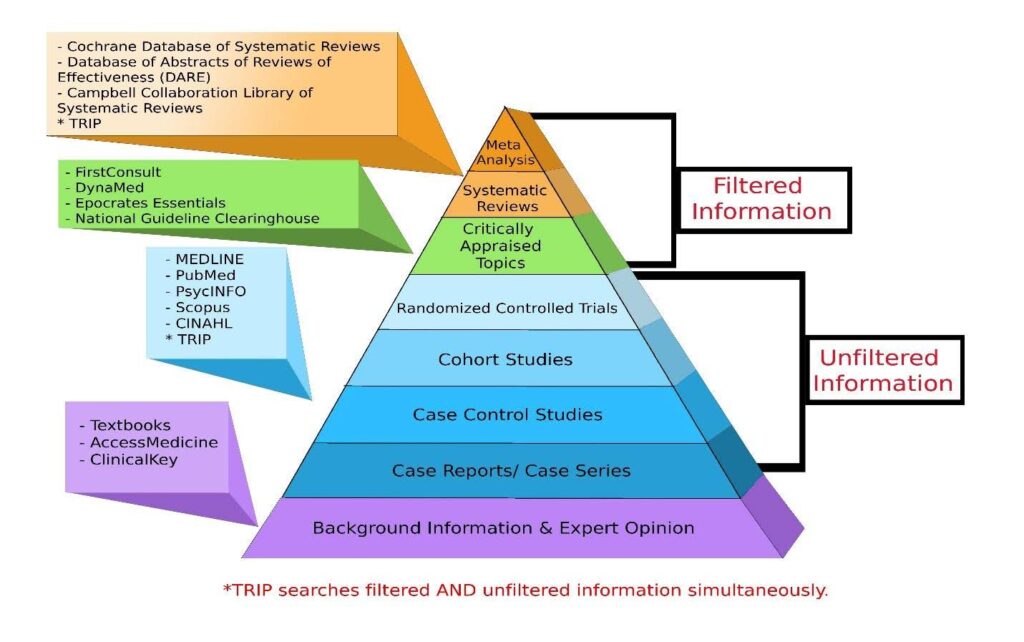

A systematic review is rooted in the tradition of evidence-based practice, where the goal is to collect all available research on a specific question and evaluate it in a structured way. Researchers often use systematic reviews when they want to know whether an intervention works, how strong an association is, or what the overall direction of evidence suggests across multiple studies. The process is based on principles of transparency and reproducibility: every step, from search strategies to criteria for including studies, is documented clearly to reduce bias (Moher et al., 2009). This approach reflects a more positivist view of knowledge, where the assumption is that truth can be discovered through careful measurement and comparison.

Meta-synthesis, however, comes from the qualitative research tradition, which is shaped by a constructivist understanding of knowledge. Instead of focusing on measurable outcomes, qualitative researchers explore how people experience events, how they give meaning to situations, and how social contexts shape behavior. A meta-synthesis brings together insights from multiple qualitative studies and interprets them in a way that goes beyond a simple summary. The goal is to create a deeper conceptual understanding or develop new theoretical interpretations (Sandelowski & Barroso, 2007). Rather than trying to reduce studies into one conclusion, the process values complexity and aims to preserve the richness of participants’ voices.

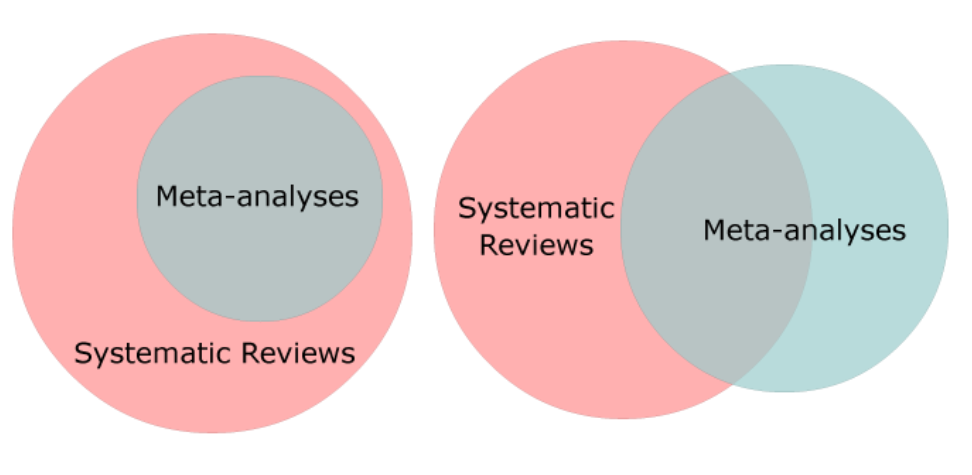

How Each Approach Treats Variation

The philosophical foundations also shape how each method treats variation among studies. In systematic reviews, especially those that include statistical meta-analyses, differences between studies are often treated as something to measure and manage. Heterogeneity is quantified so researchers can determine whether studies are similar enough to be combined meaningfully. In meta-synthesis, variation is not a problem to be controlled; it is a source of insight. Differences in context, perspective, or participant experience often reveal important nuances that help shape the final interpretation.

Even the language used within each method shows how distinct they are. Systematic reviews often rely on terms like “risk of bias,” “effect size,” or “level of evidence.” Meta-synthesis uses terms such as “themes,” “interpretive integration,” and “translation across studies.” These are not just stylistic differences; they reflect fundamentally different goals and ways of understanding the world.

With these conceptual foundations laid out, it becomes easier to appreciate why confusing the two approaches can lead to weak or inappropriate conclusions. Systematic reviews reflect a positivist, evidence-based approach aimed at measuring and comparing outcomes across studies. Meta-syntheses emerge from constructivist qualitative research and focus on interpreting experiences, meanings, and social contexts. Their treatment of variation, their language, and their assumptions about what counts as knowledge diverge sharply. Understanding these philosophical roots helps researchers select the method that genuinely fits their question, instead of forcing a method simply because it is widely used or treating the approaches as interchangeable.

2. Methodological Structures and Processes: How the Two Approaches Are Executed

Although systematic reviews and meta-syntheses share the broad goal of making sense of existing research, the way each one works in practice is quite different. These differences aren’t just procedural; they reflect the contrasting purposes and assumptions behind each method. Understanding how each approach unfolds step by step helps clarify why they produce such different types of knowledge.

Systematic Review: Structured, Transparent, and Reproducible

A systematic review usually begins with a tightly framed research question, often built using structures like PICO (Population, Intervention, Comparison, Outcome). This step ensures the question is specific enough to guide the search strategy and screening process. The next phase involves developing a comprehensive search plan. Researchers search multiple databases, hand-check reference lists, and sometimes include gray literature to avoid leaving out relevant studies. Transparency is key here, which is why guidelines like PRISMA emphasize documenting every decision (Moher et al., 2009).
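To make the link between a PICO question and a search plan concrete, here is a minimal Python sketch. It assumes a common convention (synonyms joined with OR inside each PICO element, elements joined with AND); the function name `build_search_string` and the example terms are hypothetical, and real databases each have their own syntax and controlled vocabulary that this does not cover.

```python
def build_search_string(pico: dict[str, list[str]]) -> str:
    """Join synonyms with OR inside each PICO element, then join elements with AND."""
    groups = []
    for element in ("population", "intervention", "comparison", "outcome"):
        terms = pico.get(element, [])
        if not terms:
            continue  # skip elements with no terms, e.g. no comparator specified
        # Quote multi-word phrases so they are searched as a unit
        quoted = [f'"{term}"' if " " in term else term for term in terms]
        groups.append("(" + " OR ".join(quoted) + ")")
    return " AND ".join(groups)


# Hypothetical review question, for illustration only
example_pico = {
    "population": ["adolescents", "teenagers"],
    "intervention": ["cognitive behavioural therapy", "CBT"],
    "comparison": [],
    "outcome": ["anxiety"],
}

query = build_search_string(example_pico)
print(query)
```

Keeping the terms in a plain dictionary also makes it easy to record the exact search in the review protocol, which is the kind of documentation PRISMA asks for.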

Once studies are identified, the screening process begins. Two or more reviewers typically assess titles and abstracts independently to reduce the chance of bias. After full-text screening, the included studies go through quality appraisal, where tools such as the Cochrane Risk of Bias framework or the Joanna Briggs Institute checklists help researchers evaluate methodological rigor (Higgins et al., 2011). This careful filtering ensures that the final synthesis is built on solid evidence.
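The independent screening step can be pictured with a small Python sketch. It assumes each reviewer's decisions are stored as a mapping from a record ID to "include" or "exclude"; the function name `compare_screening` and the record IDs are hypothetical, and how conflicts get resolved (discussion, a third reviewer) is left to the review team.

```python
def compare_screening(reviewer_a: dict[str, str], reviewer_b: dict[str, str]):
    """Split records screened by both reviewers into agreements and conflicts."""
    agreed_include, agreed_exclude, conflicts = [], [], []
    # Only compare records that both reviewers have screened
    for record_id in sorted(reviewer_a.keys() & reviewer_b.keys()):
        decision_a = reviewer_a[record_id]
        decision_b = reviewer_b[record_id]
        if decision_a != decision_b:
            conflicts.append(record_id)  # needs discussion or a third reviewer
        elif decision_a == "include":
            agreed_include.append(record_id)
        else:
            agreed_exclude.append(record_id)
    return agreed_include, agreed_exclude, conflicts


# Hypothetical title-and-abstract decisions from two reviewers
decisions_a = {"rec1": "include", "rec2": "exclude", "rec3": "exclude", "rec4": "include"}
decisions_b = {"rec1": "include", "rec2": "include", "rec3": "exclude", "rec4": "include"}

included, excluded, to_resolve = compare_screening(decisions_a, decisions_b)
print("Go to full text:", included)
print("Excluded:", excluded)
print("Conflicts to resolve:", to_resolve)
```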

If the review includes quantitative data, researchers might conduct a meta-analysis, where statistical techniques are used to combine effect sizes. Even when a meta-analysis isn’t possible, systematic reviews still summarize findings in a structured and transparent way. Their strength lies in consistency and reproducibility. Anyone following the same steps should reach roughly the same conclusion, provided they use the same criteria.
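For readers who want to see what "combining effect sizes" can look like, the sketch below uses inverse-variance weighting under a fixed-effect model. This is one standard option, not the only one (random-effects models are also widely used), and the post does not prescribe a particular model. The function name `pool_fixed_effect` and the input numbers are made up for illustration.

```python
import math


def pool_fixed_effect(effects, std_errors):
    """Inverse-variance pooled estimate under a fixed-effect model."""
    # More precise studies (smaller standard error) receive more weight
    weights = [1.0 / se**2 for se in std_errors]
    total_weight = sum(weights)
    pooled = sum(w * y for w, y in zip(weights, effects)) / total_weight
    pooled_se = math.sqrt(1.0 / total_weight)
    # Approximate 95% confidence interval using the normal distribution
    ci = (pooled - 1.96 * pooled_se, pooled + 1.96 * pooled_se)
    return pooled, pooled_se, ci


# Hypothetical effect sizes and standard errors from two studies
study_effects = [0.2, 0.4]
study_std_errors = [0.1, 0.1]

pooled, pooled_se, ci = pool_fixed_effect(study_effects, study_std_errors)
print(f"Pooled effect: {pooled:.3f} (SE {pooled_se:.3f})")
print(f"95% CI: {ci[0]:.3f} to {ci[1]:.3f}")
```

Because the weights depend only on the reported standard errors, two teams working from the same extracted data would get the same pooled estimate, which is the reproducibility point made above.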

Meta-Synthesis: Interpretive, Flexible, and Conceptually Rich

A meta-synthesis, however, follows a much more interpretive path. Instead of starting with a narrowly defined question about effectiveness, it usually begins with a broader question about experiences, meanings, or processes. The search strategy is still systematic, but it allows for more flexibility, since qualitative studies use diverse terminology and don’t always fit neatly into controlled vocabulary systems (Barnett-Page & Thomas, 2009).

After selecting studies, researchers read them closely, often multiple times, to become familiar with the themes and interpretations the original authors developed. The process is less about filtering out studies with “weak designs” and more about understanding how each study contributes to a bigger picture. Quality appraisal still matters, but the criteria focus on credibility, richness, and transparency rather than statistical rigor (Dixon-Woods et al., 2007).

The Interpretive Heart of Meta-Synthesis

The heart of a meta-synthesis is the interpretive stage. Depending on the chosen approach (meta-ethnography, thematic synthesis, or meta-aggregation), the researcher begins translating findings across studies. In meta-ethnography, for example, researchers compare concepts from one study to another through a process called “reciprocal translation,” looking for ways the ideas speak to each other (Noblit & Hare, 1988). The aim is not to reduce everything into a single theme but to build a new, richer interpretation that goes beyond what any individual study reported.

Some meta-synthesis approaches, like thematic synthesis, use structured coding techniques similar to thematic analysis. Researchers extract themes from each study, compare them, and build higher-level themes that reflect shared meanings across the dataset (Thomas & Harden, 2008). Other approaches, such as meta-aggregation, focus on summarizing qualitative findings in a way that supports practical decision-making without imposing new interpretations (Lockwood et al., 2015). Whatever the technique, meta-synthesis treats the studies themselves as data, drawing out interpretations that are grounded yet expansive.

To summarize, systematic reviews and meta-syntheses follow fundamentally different methodological pathways. Systematic reviews emphasize precision, transparency, and reproducibility, using structured search strategies, strict screening procedures, and quality appraisal tools to arrive at consistent evidence-based conclusions. Meta-syntheses, in contrast, rely on interpretive engagement with qualitative studies, valuing depth, contextual meaning, and conceptual insight over standardization. Understanding these procedural differences sets the stage for the next section on outputs and deliverables, where the focus shifts from how the methods are executed to what they ultimately produce and how their outputs serve different research goals.

3. Depth of Insight vs. Breadth of Evidence: Comparing Outputs and Deliverables

One of the clearest differences between systematic reviews and meta-syntheses becomes visible when you look at the type of output each method produces. Even when both approaches are carried out rigorously, the final results look nothing alike. This is because each method aims to answer a different kind of question, and that aim naturally shapes the form of the deliverables.

Systematic Review Outputs

A systematic review produces a broad, structured overview of existing evidence. Its strength lies in its ability to gather large amounts of information, filter it through explicit criteria, and present a balanced picture of what the research community currently knows about a specific research question. This usually means identifying patterns across studies, weighing the quality of evidence, and summarizing the conclusions. When a review includes a meta-analysis, the output might even condense findings into a single statistic that represents an average effect size. Forest plots, funnel plots, and summary tables are common tools because they help readers visually interpret the direction and strength of the evidence (Higgins et al., 2019). The final product often answers questions like “Does this intervention work?” or “How consistent is the evidence?”

Because systematic reviews prioritize breadth, they allow decision-makers to rely on a comprehensive evidence base rather than a handful of individual studies. This is especially important in fields such as medicine, public health, and education, where policies and guidelines depend on knowing how well something works across different settings. As Gough et al. (2017) note, systematic reviews are built to support decisions that require high-level certainty, making them essential tools for policy development and clinical guidance.

Meta-Synthesis Outputs

A meta-synthesis, however, produces something much different. Instead of summarizing outcomes, it dives deeply into the meanings and interpretations found across qualitative studies. Rather than focusing on how many studies show a particular result, meta-synthesis examines how participants experience a phenomenon and how researchers have interpreted those experiences. The output may take the form of themes, conceptual models, interpretive narratives, or even new theoretical insights that were not apparent in any single study (Sandelowski & Barroso, 2007).

Where a systematic review might present a table of effect sizes, a meta-synthesis might map out a model showing how people cope with a chronic illness or how students navigate a major educational transition. These interpretations highlight nuance, contradictions, and contextual factors that quantitative approaches usually cannot capture. Noblit and Hare (1988), the early pioneers of meta-ethnography, described this as “bringing together” the interpretations of different authors to create a new, expanded understanding.

Variation as Statistical Noise vs. Variation as Interpretive Richness

Another important contrast lies in the way each method treats variation. In systematic reviews, variation, also known as heterogeneity, is something to measure and interpret statistically. High heterogeneity can signal differences in study design or population that might affect the pooled results (Borenstein et al., 2021). In meta-synthesis, variation is not a statistical concern; it is an interpretive resource. Differences in perspectives or contexts often help researchers understand the broader range of experiences within a phenomenon. Instead of treating variation as noise, meta-synthesis uses it to build richer interpretations.
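On the systematic review side, "measuring" heterogeneity often means computing Cochran's Q and the I² statistic. The post does not name specific statistics, so treat the choice here as an assumption; the sketch below uses the usual definitions, with Q as the weighted sum of squared deviations from the fixed-effect pooled estimate and I² = max(0, (Q − df) / Q) × 100. The function name `heterogeneity` and the numbers are hypothetical.

```python
def heterogeneity(effects, std_errors):
    """Return Cochran's Q, its degrees of freedom, and I-squared (as a percentage)."""
    weights = [1.0 / se**2 for se in std_errors]
    pooled = sum(w * y for w, y in zip(weights, effects)) / sum(weights)
    # Q: weighted squared deviations of each study from the pooled estimate
    q = sum(w * (y - pooled) ** 2 for w, y in zip(weights, effects))
    df = len(effects) - 1
    # I-squared: share of total variation beyond what chance alone would explain
    i_squared = max(0.0, (q - df) / q) * 100 if q > 0 else 0.0
    return q, df, i_squared


# Hypothetical effect sizes with equal standard errors
q, df, i_squared = heterogeneity([0.1, 0.5, 0.3], [0.1, 0.1, 0.1])
print(f"Q = {q:.2f} on {df} degrees of freedom, I-squared = {i_squared:.0f}%")
```

A meta-synthesis has no equivalent calculation; the same differences between studies would be read and interpreted rather than summarized in a number.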

The outputs of each method also differ in how they guide decision-making. A systematic review might conclude with graded recommendations or strength-of-evidence ratings. A meta-synthesis might produce a conceptual framework that helps researchers or practitioners think differently about a problem. Both are valuable, but they serve different needs.

Understanding these distinctions helps researchers choose the right method for their goal. If the aim is to know whether a program or intervention is effective, a systematic review provides the broad, structured evidence needed for confident conclusions. If the aim is to understand how people experience an issue, why they behave in certain ways, or what social factors shape their decisions, a meta-synthesis offers the depth and insight necessary for meaningful understanding. Recognizing these differing deliverables helps clarify why the choice between the two methods depends on the nature of the research question. This distinction naturally leads to the next section on emerging methodological trends, where the focus shifts from comparing established approaches to examining how new tools, hybrid methods, and evolving research needs are reshaping the landscape of evidence synthesis.

4. Emerging Methodological Trends: Where the Field Is Evolving

Research synthesis has changed rapidly over the past decade. As both the volume of published studies and the complexity of research questions grow, scholars have been forced to rethink how evidence is gathered, interpreted, and presented. These shifts have led to several emerging trends that shape how systematic reviews and meta-syntheses are being used today. Understanding these developments helps researchers choose methods that are not only rigorous but also responsive to modern challenges.

Mixed-Methods Reviews

One of the most noticeable trends is the rise of mixed-methods systematic reviews, where qualitative and quantitative evidence are integrated within the same review. These reviews acknowledge that some questions require both numerical data and explanations of context or experience. For example, a review exploring the effectiveness of a treatment might also incorporate patient perspectives to explain why certain interventions work better for some groups than others. Harden and Thomas (2010) note that combining these approaches helps bridge the gap between “what works” and “what it means,” allowing for a more rounded understanding of complex interventions. This integration has encouraged researchers to blend meta-analysis with thematic synthesis or meta-ethnography, something that would have been unusual two decades ago.

Automation and AI: Technology Accelerating Review Processes

Another major shift is the increasing use of automation and artificial intelligence to manage the overwhelming amount of published literature. Screening thousands of studies manually can take months, which is why tools that assist with citation screening, de-duplication, and text mining are gaining popularity. These tools, such as machine learning-assisted screening, do not replace human judgment, but they speed up the early stages of the review process significantly. According to O’Mara-Eves et al. (2015), text-mining tools might reduce screening workload by roughly 30% to 70%, although the saving sometimes comes at the cost of missing a small share of relevant studies. That saving frees researchers to focus on interpretation and synthesis. The same trend is appearing in qualitative synthesis, where text-mining techniques are beginning to support early coding or study identification, though full automation remains far from feasible due to the interpretive nature of qualitative work.

Improving Transparency

There is also growing interest in developing clearer reporting standards for qualitative synthesis, similar to what PRISMA has done for systematic reviews. Historically, qualitative synthesis methods lacked standardized reporting guidelines, which sometimes made it difficult to assess the transparency or rigor of a meta-synthesis. Newer frameworks, such as ENTREQ (Enhancing Transparency in Reporting the Synthesis of Qualitative Research), aim to improve clarity and consistency without limiting the interpretive freedom that qualitative synthesis requires (Tong et al., 2012). These efforts reflect a broader shift toward accountability and reproducibility across all forms of evidence synthesis.

Expanding Participation

Finally, the field has seen a shift toward greater user engagement, where practitioners, policymakers, and community members are involved in shaping the scope and direction of a review. This approach is common in health and social research, where stakeholder involvement ensures that the final synthesis answers questions that matter in real-world practice. Pollock et al. (2019) argue that involving users can enhance both the credibility and usefulness of evidence, particularly when working with vulnerable populations or ethically sensitive topics.

Together, these trends show that research synthesis is becoming more flexible, more collaborative, and more technologically supported. Rather than relying on rigid boundaries between methods, researchers are adopting approaches that accommodate the complexity of modern evidence. This evolution does not blur the line between systematic reviews and meta-syntheses; instead, it highlights how each method can adapt and contribute to a broader, more dynamic evidence ecosystem. These trends also set up the practical question addressed in the next section: how scholars and practitioners can decide which synthesis approach best fits the challenges they face today.

5. Choosing the Right Approach: Practical Decision Framework for Researchers

With so many synthesis methods available today, choosing the right one can feel overwhelming. Yet the choice between a systematic review and a meta-synthesis is not as complicated as it might seem. It simply requires researchers to be honest about the purpose of their study, the type of data they have, and the kind of answer they hope to produce. A clear decision framework can help researchers avoid forcing a method onto a question that does not fit.

Using the Research Question as the Primary Compass

The starting point is always the research question. This is the most reliable guide. If the question focuses on effectiveness, impact, prevalence, or measurable outcomes, then a systematic review is usually the best fit. These kinds of questions require structured comparisons, standardized criteria, and a way to assess the strength of evidence across many studies. As Petticrew and Roberts (2006) emphasize, systematic reviews are designed for questions where “what works, how well, and for whom” are central concerns. In fields like medicine or public health, these questions often guide policy or clinical decisions, which makes the rigor and transparency of systematic reviews especially important.

On the other hand, if the aim is to understand lived experiences, meanings, emotions, or social processes, a meta-synthesis is far more appropriate. These questions require depth rather than breadth: answers that capture the richness of human experience rather than numerical summaries. Meta-synthesis allows researchers to explore patterns of meaning across different qualitative studies, helping them build new conceptual understandings. According to Thorne (2016), qualitative syntheses are particularly useful when researchers want to inform practice by making sense of the complexities that shape human behavior.

Allowing the Data to Guide the Method

A second consideration is the type of evidence available. If most of the existing studies are quantitative, use standardized measurements, or report effect sizes, then the structure of a systematic review aligns well with that body of evidence. If the literature is dominated by interview-based or observational studies, where findings are presented as themes or narratives, meta-synthesis is the natural fit. Trying to force qualitative studies into a rigid, quantitative format usually leads to shallow conclusions. Likewise, treating quantitative studies as though they are narrative reflections strips away the detail that makes them useful. Good synthesis always respects the nature of the available evidence.

Managing Variation

The next factor involves study heterogeneity, or the degree of difference among studies. Systematic reviews can manage variation, but only up to a point. When studies use fundamentally different research designs, populations, or outcome measures, a statistical meta-analysis may not be meaningful. In these cases, a narrative systematic review might still work, but it requires careful judgment. In contrast, heterogeneity is expected, and even welcomed, in meta-synthesis. Differences between studies can reveal important insights about context, identity, or experience. As Major and Savin-Baden (2010) note, qualitative synthesis treats variation not as a barrier but as a window into the complexity of human life.

Practical constraints also matter. Systematic reviews are typically more time-consuming, often requiring multiple reviewers, extensive screening, and detailed documentation. They demand strong familiarity with search strategies, appraisal tools, and reporting guidelines. Meta-synthesis requires deep interpretive skills, comfort with ambiguity, and the ability to handle multiple layers of meaning. Neither method is “easier”; they simply require different strengths. Researchers should consider their own expertise and the resources available, including time, collaborators, and access to methodological guidance.

Ethical Alignment Between Topic and Method

Ethical considerations play a role as well. When working with vulnerable populations or sensitive topics, qualitative synthesis can provide insights that respect context and complexity, avoiding the reductionism that sometimes comes with numerical approaches. At the same time, systematic reviews may be necessary when policy decisions depend on measurable evidence that affects public welfare. As Mays, Pope, and Popay (2005) suggest, choosing a method is partly about ensuring that the synthesis honors the subject matter and the voices represented within it.

To support decision-making, it can be helpful to use a simple guiding set of questions:

- What type of question am I trying to answer: effectiveness or experience?

- What type of data dominates the existing literature?

- Am I seeking clarity, generalizability, or deeper understanding?

- How much heterogeneity exists in the studies?

- What resources and expertise do I have?

- What ethical considerations might shape the approach?

Clear answers to these questions usually point toward the right method. And in cases where the research question is broad or multifaceted, it may even be appropriate to combine approaches through a mixed-methods review. These hybrids have become more common in health and social research because they acknowledge that real-world problems often require both measurable outcomes and meaningful explanations.
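As a rough illustration of how the first two guiding questions narrow the choice, here is a small Python sketch. It is a deliberate simplification: the function name `suggest_method` and the category labels are hypothetical, and it ignores heterogeneity, resources, and ethics, which still need human judgment.

```python
def suggest_method(question_focus: str, dominant_evidence: str) -> str:
    """Map a question focus and the dominant evidence type to a synthesis approach.

    question_focus: "effectiveness", "experience", or "both"
    dominant_evidence: "quantitative", "qualitative", or "mixed"
    """
    if question_focus == "both" or dominant_evidence == "mixed":
        return "mixed-methods review"
    if question_focus == "effectiveness" and dominant_evidence == "quantitative":
        return "systematic review"
    if question_focus == "experience" and dominant_evidence == "qualitative":
        return "meta-synthesis"
    # The question and the available literature point in different directions
    return "mismatch: revisit the question or the evidence base"


print(suggest_method("effectiveness", "quantitative"))
print(suggest_method("experience", "qualitative"))
print(suggest_method("effectiveness", "qualitative"))
```

The last call shows the useful case: when the question and the literature do not line up, the sketch flags a mismatch instead of forcing a method onto it.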

In the end, choosing between a systematic review and a meta-synthesis is less about following trends and more about aligning method with purpose. When the method fits the question, the synthesis becomes more credible, useful, and relevant, qualities that matter not only to researchers but to the communities and decision-makers who rely on evidence to guide practice. This decision-making framework leads naturally into the Conclusion, where the overarching insights across all sections come together to clarify how synthesis methods can be used thoughtfully, responsibly, and effectively.

Conclusion

As research synthesis continues to evolve, the divide between systematic reviews and meta-syntheses becomes easier to understand and more important to respect. These methods were never meant to compete with each other. Instead, they serve different purposes, answer different types of questions, and offer different kinds of insight. Recognizing these distinctions helps researchers avoid the temptation to treat all review methods as interchangeable or to select an approach simply because it is more familiar.

Choosing the right method, whether a broad systematic review or an interpretive qualitative meta-synthesis, determines the strength and clarity of your research outcomes. Researchers today must balance depth, context, and methodological rigor, especially when working with qualitative evidence.


References

Glasziou, P., & Chalmers, I. (2018). Research waste is still a scandal, an essay by Paul Glasziou and Iain Chalmers. BMJ, 363, k4645.

Higgins, J. P. T., & Thomas, J. (2022). Cochrane handbook for systematic reviews of interventions (2nd ed.). Wiley.

Noblit, G. W., & Hare, R. D. (1988). Meta-ethnography: Synthesizing qualitative studies. Sage.

Moher, D., Liberati, A., Tetzlaff, J., Altman, D. G., & The PRISMA Group. (2009). Preferred reporting items for systematic reviews and meta-analyses: The PRISMA statement. PLoS Medicine, 6(7), e1000097.

Sandelowski, M., & Barroso, J. (2007). Handbook for synthesizing qualitative research. Springer Publishing.

Thomas, J., & Harden, A. (2008). Methods for the thematic synthesis of qualitative research in systematic reviews. BMC Medical Research Methodology, 8, 45.

Barnett-Page, E., & Thomas, J. (2009). Methods for the synthesis of qualitative research: A critical review. BMC Medical Research Methodology, 9, 59.

Dixon-Woods, M., Booth, A., & Sutton, A. J. (2007). Synthesising qualitative research: A review of published reports. Qualitative Research, 7(3), 375–422.

Higgins, J. P. T., Altman, D. G., & Sterne, J. A. C. (2011). Chapter 8: Assessing risk of bias in included studies. In J. P. T. Higgins & S. Green (Eds.), Cochrane handbook for systematic reviews of interventions (Version 5.1.0). The Cochrane Collaboration.

Lockwood, C., Porritt, K., Munn, Z., Rittenmeyer, L., Salmond, S., Bjerrum, M., … & Aromataris, E. (2015). Chapter 2: Systematic reviews of qualitative evidence. In E. Aromataris & Z. Munn (Eds.), Joanna Briggs Institute Reviewer’s Manual. JBI.

Borenstein, M., Hedges, L. V., Higgins, J. P. T., & Rothstein, H. R. (2021). Introduction to meta-analysis (2nd ed.). Wiley.

Gough, D., Oliver, S., & Thomas, J. (2017). An introduction to systematic reviews (2nd ed.). Sage.

Higgins, J. P. T., Thomas, J., Chandler, J., Cumpston, M., Li, T., Page, M. J., & Welch, V. A. (Eds.). (2019). Cochrane handbook for systematic reviews of interventions (2nd ed.). Wiley.

Harden, A., & Thomas, J. (2010). Mixed methods and systematic reviews: Examples and emerging issues. In A. Tashakkori & C. Teddlie (Eds.), Sage handbook of mixed methods in social & behavioral research (2nd ed., pp. 749–774). Sage.

O’Mara-Eves, A., Thomas, J., McNaught, J., Miwa, M., & Ananiadou, S. (2015). Using text mining for study identification in systematic reviews: A systematic review of current approaches. Systematic Reviews, 4(1), 1–22.

Pawson, R., Greenhalgh, T., Harvey, G., & Walshe, K. (2005). Realist review: A new method of systematic review designed for complex policy interventions. Journal of Health Services Research & Policy, 10(Suppl 1), 21–34.

Pollock, A., Campbell, P., Struthers, C., Synnot, A., McGill, K., Torrance, N., … & Nunn, J. (2019). Stakeholder involvement in systematic reviews: A scoping review. Systematic Reviews, 8, 1–17.

Tong, A., Flemming, K., McInnes, E., Oliver, S., & Craig, J. (2012). Enhancing transparency in reporting the synthesis of qualitative research (ENTREQ). BMC Medical Research Methodology, 12, 181.

Major, C. H., & Savin-Baden, M. (2010). An introduction to qualitative research synthesis: Managing the information explosion in social science research. Routledge.

Mays, N., Pope, C., & Popay, J. (2005). Systematically reviewing qualitative and quantitative evidence to inform management and policy-making in the health field. Journal of Health Services Research & Policy, 10(Suppl 1), 6–20.

Petticrew, M., & Roberts, H. (2006). Systematic reviews in the social sciences: A practical guide. Blackwell Publishing.

Thorne, S. (2016). Interpretive description: Qualitative research for applied practice (2nd ed.). Routledge.
